Debugging Like A Boss!

Perhaps no other topic demonstrates the core values of Intentional Logic better than debugging. If you are an engineer of any type, or a student of engineering, then you’ve debugged. But have you given the process of debugging much thought? Most people don’t, or if they do, it doesn’t go much beyond being methodical. Being more intentional about debugging is key to doing it better, faster, and with less frustration.

This article is all about abstracting the concepts of debugging, and thinking about them one or two levels higher. This allows you to consider aspects of debugging that you probably haven’t considered before.

Fundamentals

Fundamentally, debugging is fixing a problem when everything is potentially broken and you don’t know anything.

Literally, everything could be wrong or broken. This includes, but is not limited to: user actions and reports, all documentation, test setups, the test itself, measurement equipment, the device under test, and the coworker standing next to you. Especially your coworker. He’s kind of creepy.

It might go without saying that you don’t know everything about the problem. If you did, it would be called “fixing” and not “debugging”. It is important to start off on the right foot by assuming that you know even less than you do. Odds are that your assumption will be true!

Key Concept

Debugging is all about gaining and validating knowledge. Everything you do in debugging should be focused on learning something, or figuring out if what you learned is correct. You start with lots of unknowns, and you do a test to gain some knowledge. You do another test to validate that knowledge. Rinse and repeat, until you fix the problem.

The greatest enemy of knowledge is not ignorance; it is the illusion of knowledge. —Daniel J. Boorstin (Often falsely attributed to Stephen Hawking)

Thinking you know something and being wrong is much worse than knowing that you don’t know it in the first place. If you think you know it, then you tend not to do any self-improvement or self-correction. Much of debugging (and life!) should be about validating the knowledge that you have.

Before doing a debugging step, you have to ask yourself, “What knowledge will I gain by doing this?” And, “How is this knowledge going to help me?” Not only does this keep you focused on gaining knowledge, but it helps you avoid doing things that ultimately won’t help you fix your problem.

You Are Flawed

Humans are massively flawed, and we are mostly blissfully ignorant of that fact. Going into detail on this is way beyond the scope of this article, but I’ll briefly cover the highlights.

For starters, your memory is horrible. Not only do you forget things, but your brain makes up false information to fill in the gaps. This old episode of Scam School shows this. This is why personal testimony is (for the most part) not valid evidence in a scientific study. But what does this say about user reports of bugs? They are not to be trusted!

The human senses are inaccurate at best. Your eyes have floaters, blind spots, blinking, and other issues. Your ears have the audio equivalent of floaters and blind spots. Here again, your brain often makes up information to fill in the gaps in your senses! That’s why optical and audible illusions are a thing. Just because you saw or heard something doesn’t mean it’s true!

Humans are inherently pattern-seeking. We look for and see patterns in everything, even when there is no pattern there. In prehistoric times, we evolved to associate a rustling of leaves with a lion in the bushes. In that scenario, it is to our benefit to have a false positive: thinking that there is a lion when one is not there. But in modern times this is a problem. If taken to extremes, conspiracy theories are born. In debugging, we also see patterns everywhere. It is important that we validate those patterns to avoid chasing a problem that isn’t there.

The term for seeing patterns where there are none is apophenia. The more you know!

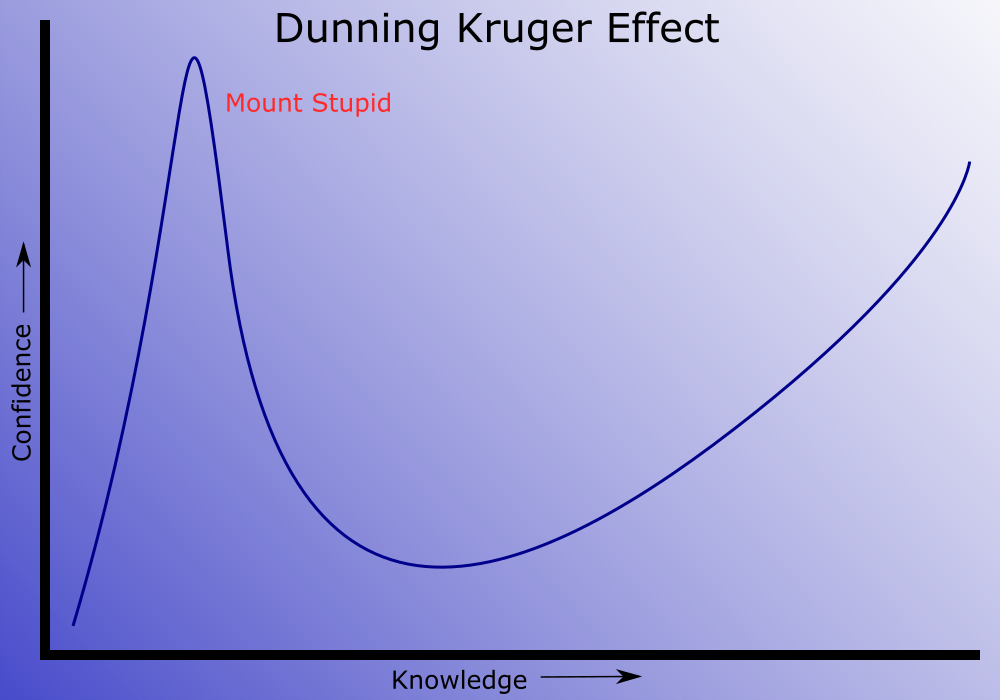

The Dunning-Kruger Effect is a real problem. Simply put, when we first start learning about a topic, our confidence grows out of proportion to our knowledge. Then (hopefully) we realize that we really don’t know as much as we thought, and our confidence falls. That initial peak of confidence is sometimes called Mount Stupid. Some people never climb down the backside of Mount Stupid. It is important to know about and anticipate the Dunning-Kruger effect, and to avoid being overly confident.

Critical Thinking

So now what? Your job is to gain and validate knowledge, but your senses and cognitive ability are massively flawed. How do you get past this?

There is a whole field out there called “Critical Thinking”. The term critical thinking is not well defined. It is both a philosophical concept (from Socrates, Plato, and others) and a type of mathematics (predicate logic) and everything in between. I prefer to use the vague definition of “anything that helps you gain and validate knowledge while taking into account human failings”.

You probably already have some (or know about some) critical thinking skills. The scientific method is all about being methodical. Most people know that in debugging you need to make the problem repeatable. You might also know to change only one variable at a time. All of these are important parts of critical thinking, but only a small part.

A good place to start when learning critical thinking skills is with Logical Fallacies. A logical fallacy is a named, recognizable pattern of faulty reasoning. One common logical fallacy is assuming that correlation implies causation. Just because two things are correlated does not mean that one caused the other. Another one is the “Appeal to Nature”. Just because a food or drug is all natural does not mean that it is good for you.

We all suffer from biases. When looking at evidence, we all tend to believe things that support our biases and ignore evidence that doesn’t. This is called confirmation bias. Our lives are full of confirmation bias. Not only is it all around us, but we do it ourselves on a regular basis. That is why: The more you believe something to be true, the more you should question its authenticity! That’s a good rule for life. It works well for debugging, but it should also apply to your political and religious views.

I would rather have questions that cannot be answered, than answers that cannot be questioned. —Richard Feynman

There are a lot of logical fallacies, and it is worth becoming familiar with some of them. Go here to download free posters on logical fallacies and biases.

Everything Is Suspect

Now that you doubt yourself as a solid anchor in reality, you should also suspect everything else. Obviously the device under test (DUT) is suspect. But…

Start with whoever reported the bug. They are reporting the bug in the best way they can, but that is usually inadequate. Their memory and senses are flawed, and they interpret their experiences through their own rose-colored glasses. While their report is a start, you need to quickly stop using their report as a guide in your debugging efforts. The best way to do this is to reproduce the bug. Once it is reproducible, their report is no longer needed.

Once you have a reproducible bug, you have a test setup that consists of the DUT, the other test gear (o-scopes, signal generators, etc.), and yourself. You already suspect the DUT and yourself, but now you should suspect the test gear. Is your signal generator working properly? Is your o-scope measuring the right thing? Are you even doing the correct test?

Constantly do sanity checks. These are small tests just to make sure that what you think you know is actually correct.
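One way to make a sanity check concrete is to point your instrument at something whose value you already know before trusting it on the DUT. The sketch below is only an illustration in Python. The names `sanity_check` and `read_reference_voltage`, along with the 5.00 V reference and its tolerance, are hypothetical and not taken from any real instrument API.

```python
def sanity_check(measure, reference_value, tolerance):
    """Return True if a reading of a known reference is within tolerance."""
    reading = measure()
    return abs(reading - reference_value) <= tolerance


# Hypothetical stand-in for reading a known 5.00 V reference with a voltmeter.
def read_reference_voltage():
    return 5.02


if sanity_check(read_reference_voltage, reference_value=5.00, tolerance=0.05):
    print("Instrument agrees with the known reference; carry on.")
else:
    print("Instrument disagrees with the known reference; fix the setup first.")
```

If the check fails, the thing you just learned is about your test gear, not about the DUT, and that is still useful knowledge.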

Know What To Ignore

Just as important as knowing something is knowing what you can safely ignore. In fact, most of the early experiments in a debugging session should be focused on figuring that out. Another way to phrase this is to “eliminate variables”. Knowing what to ignore is super important, because it limits the scope of where the bug can be.

After making a bug reproducible, most people try to simplify the test, looking for the simplest test that still reproduces the bug. This is essentially an exercise in figuring out what to ignore.
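Simplifying a test can be sketched as a loop: remove one step, rerun, and keep the removal only if the bug still reproduces. The Python below is a minimal sketch under assumptions. The step names and the `still_fails` callback are hypothetical, and a real setup would rerun the actual test instead of evaluating a lambda.

```python
def minimize(steps, still_fails):
    """Greedily drop steps that are not needed to reproduce the failure."""
    if not still_fails(steps):
        raise ValueError("the full test must reproduce the bug before minimizing")
    needed = list(steps)
    i = 0
    while i < len(needed):
        candidate = needed[:i] + needed[i + 1:]
        if still_fails(candidate):
            needed = candidate  # step i was irrelevant; ignore it from now on
        else:
            i += 1  # step i matters; keep it and try the next one
    return needed


# Hypothetical scenario: the bug needs both DMA and a large packet to appear.
steps = ["power_on", "load_config", "enable_dma", "blink_led", "send_large_packet"]
still_fails = lambda s: "enable_dma" in s and "send_large_packet" in s

print(minimize(steps, still_fails))  # ['enable_dma', 'send_large_packet']
```

Every step that gets dropped is something you now know you can ignore. Removing one step at a time is slow for long tests, but it changes only one variable per run.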

As you go through the debugging process, don’t slack off on this. Don’t ever be satisfied that your test setup has been simplified enough.

Predict The Future

When running any test, it is important to predict what the outcome of the test will be. If you are taking an o-scope picture, predict what the o-scope will show. Whatever the test, predict what it will do. There are two very important reasons for doing this. First, it verifies your ability to interpret your test results. Second, it predetermines how to interpret the test results.
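A lightweight way to hold yourself to this is to write the prediction down before the measurement exists. The following Python sketch assumes a numeric measurement with an expected range. `Prediction`, `evaluate`, and the 3.3 V rail example are hypothetical illustrations, not a prescribed tool.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    description: str
    low: float
    high: float


def evaluate(prediction, measured):
    """Compare a measurement against a prediction recorded beforehand."""
    if prediction.low <= measured <= prediction.high:
        return f"as predicted: {prediction.description}"
    return (f"surprise: expected {prediction.low}..{prediction.high}, "
            f"measured {measured}")


# Record the prediction first, then take the measurement.
rail = Prediction("3.3 V rail is within regulation", low=3.2, high=3.4)
print(evaluate(rail, measured=3.31))
print(evaluate(rail, measured=2.90))
```

A “surprise” result is the interesting one: either the DUT is misbehaving or your understanding of it is wrong, and both are worth knowing.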

Failing to do this can result in anomaly hunting. Anomaly hunting is basically blindly poking around and looking for something that doesn’t seem right, and then assigning significance to it. Anomaly hunting is bad because it wastes time, promotes seeing patterns that are not there, and does not help you gain knowledge. Anomaly hunting is a sure sign that someone is doing it wrong.

Heuristics

Heuristic is a big word for a simple concept. A heuristic is simply a shortcut in logic, or a rule of thumb that is accurate most of the time, but not all of the time. For example, “Where there is smoke, there is fire”, is a heuristic. Logical Fallacies are another type of heuristic.

Heuristics can speed up debugging, but it is important to remember that they are not technically valid forms of logic. You can use heuristics, but always be aware of when and how they don’t apply.

If you use a heuristic to get what appears to be a correct answer, you often have to go back and use valid logic and reasoning to validate the answer. Because…

If You Don’t Know Why The Problem Went Away…

…then the problem isn’t fixed. And a problem that isn’t fixed will very likely come back to bite you at the worst moment. Usually at a demo in front of important stakeholders or when the unit has been deployed with an important customer. Whenever it happens, it will be super embarrassing for you and your employer!

This happens a lot. You are working on a bug and the bug stops happening. You don’t know why, but something that you did made the bug “go away”. Really it hasn’t gone away; you just stopped being able to reproduce the problem. The bad debugger would stop and claim the problem was fixed. But the good debugger would try to retrace their steps and make it reproducible again.
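If you kept a record of what you changed, retracing your steps can be done as a search rather than from memory. The Python sketch below is one possible approach under stated assumptions: the changes are ordered, the bug reproduces with none of them applied, and it stays hidden once the responsible change is in. The change names and the `bug_reproduces` callback are hypothetical.

```python
def first_change_that_hides_bug(changes, bug_reproduces):
    """Return the index of the change after which the bug stops reproducing.

    bug_reproduces(n) is a hypothetical callback: it rebuilds the setup with
    the first n changes applied and reports whether the bug still shows up.
    """
    lo, hi = 0, len(changes)  # invariant: bug reproduces at lo, not at hi
    if not bug_reproduces(lo) or bug_reproduces(hi):
        raise ValueError("need: bug present with no changes, absent with all")
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if bug_reproduces(mid):
            lo = mid
        else:
            hi = mid
    return hi - 1


# Hypothetical log of what was done during the session, in order.
changes = ["reseat cable", "update firmware", "lower clock", "add delay"]
bug_reproduces = lambda n: n < 3  # the bug vanishes once "lower clock" is applied

index = first_change_that_hides_bug(changes, bug_reproduces)
print(changes[index])  # lower clock
```

Finding the change that hid the bug is not the fix. It tells you where to look to understand why the problem went away.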

Humbling Experience

Hopefully by now you have realized that much of debugging is a humbling experience. Assume that you know less than you actually do. Constantly verify that your knowledge is correct. On top of that, accept that you will make spectacular mistakes.

An arrogant person makes for a horrible debugger— and thus a horrible engineer.

Tying It All Together

When debugging, you not only need to think about the problem at hand, but you need to think a level or two higher. Think about gaining and validating knowledge. Be strategic about what knowledge you seek, and aggressively validate it. Pursue knowledge that helps your efforts, and identify knowledge that you don’t need.

Do this and not only will your debugging productivity increase, but the knowledge you gain will make you a better engineer!